- #HOW TO INSTALL APACHE SPARK ON WINDOWS 8 SOFTWARE#

- #HOW TO INSTALL APACHE SPARK ON WINDOWS 8 LICENSE#

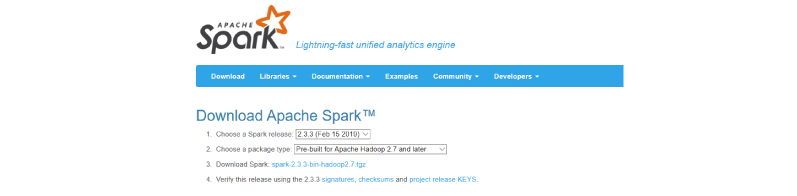

- #HOW TO INSTALL APACHE SPARK ON WINDOWS 8 DOWNLOAD#

    # first, to avoid the notorious 'permgen' error,
    # increase the amount of memory available to the JVM:
    export MAVEN_OPTS="-Xmx1300M -XX:MaxPermSize=512M -XX:ReservedCodeCacheSize=512m"
    # then trigger the build:


Download the 2.9.3 binaries for your OS from here (don't click the download link at the top; scroll down to find the deb download link, or simply use this direct download link).

Having problems with Java setup? Check the latest Ubuntu Java documentation.

    # check where it currently points
    echo "$JAVA_HOME"
    # if you're doing this on a fresh machine
    # and you just installed Java for the first time,
    # JAVA_HOME should not be set (you get an empty line when you echo it)
    # either set it system wide
    # (sudo does not apply to a shell redirect, so append through tee instead)
    echo "JAVA_HOME=/usr/lib/jvm/java-6-oracle" | sudo tee -a /etc/environment
    # or only for the current user
    # echo "JAVA_HOME=/usr/lib/jvm/java-6-oracle/" > ~/.pam_environment
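Before starting a long build, it can help to confirm that `JAVA_HOME` actually points at a usable Java installation. The following is a minimal sketch, not part of the original tutorial: `check_java_home` is a hypothetical helper name, and it only assumes that a valid installation contains an executable `bin/java`.

```shell
#!/bin/sh
# Hypothetical helper (not from the tutorial): succeeds only if the given
# directory contains an executable bin/java.
check_java_home() {
    dir="$1"
    if [ -z "$dir" ]; then
        echo "JAVA_HOME is not set" >&2
        return 1
    fi
    if [ ! -x "$dir/bin/java" ]; then
        echo "no executable found at $dir/bin/java" >&2
        return 1
    fi
    echo "JAVA_HOME looks valid: $dir"
}

# Report on the current environment without aborting the shell.
check_java_home "${JAVA_HOME:-}" || echo "JAVA_HOME needs fixing before the build"
```

Note that a value written to `/etc/environment` or `~/.pam_environment` is normally picked up only at the next login, so the check may still report an empty `JAVA_HOME` in the current session.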


Since we need Oracle's version of Java, we'll need to install it and set it as the default JVM on our system. Strictly speaking, we probably don't need Oracle's Java, but I had some weird problems while building Spark with OpenJDK, presumably because OpenJDK places jars in /usr/share/java. I'm not really sure, but installing Oracle's Java solved all those problems, so I haven't investigated any further what exactly happened there.
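To see which JVM is currently the default, one option is to resolve the `java` command on the `PATH` down to the real binary. This is a rough sketch rather than the tutorial's own procedure: `default_java_path` is a hypothetical helper name, and it assumes GNU `readlink` (as shipped with Ubuntu).

```shell
#!/bin/sh
# Print the real path behind the `java` command on PATH, following symlinks
# (including the ones managed by update-alternatives).
# Returns non-zero and prints nothing if no java is found.
default_java_path() {
    java_bin=$(command -v java) || return 1
    readlink -f "$java_bin"
}

default_java_path || echo "no java found on PATH"

# On Debian/Ubuntu, the registered alternatives can be listed and switched with:
#   update-alternatives --list java
#   sudo update-alternatives --config java
```

If the printed path is not under the Oracle installation directory, the default can be switched interactively with `update-alternatives --config java`.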


Prereqs

First, let's get the requirements out of the way. Since Oracle changed the license agreement after acquiring Java from Sun (and not in a good way), Canonical no longer provides packages for Oracle's Java.

The official one-liner describes Spark as "a general purpose cluster computing platform". Spark was conceived and developed at UC Berkeley's AMPLab. It is currently incubated at Apache and is improved and maintained by a rapidly growing community of users, so it's expected to graduate to a top-level project very soon. It's written mainly in Scala, and provides Scala, Java and Python APIs. The Scala API provides a way to write concise, higher-level routines that effectively manipulate distributed data.

Here's a quick example of how straightforward it is to distribute some arbitrary data with the Scala API:

    // parallelized collection example
    // the example uses the Scala interpreter (also called 'repl') as an interface to Spark
    // declare a Scala array:
    scala> val data = Array(1, 2, 3, 4, 5)
    // the Scala interpreter responds with:
    data: Array[Int] = Array(1, 2, 3, 4, 5)
    // distribute the array in a cluster:
    scala> val distData = sc.parallelize(data)
    // sc is an instance of SparkContext that's initialized for you by the Scala repl
    // returns a Resilient Distributed Dataset:
    distData: spark.RDD[Int] = spark.ParallelCollection@88eebe8e // repl output

What the hell is RDD?

A Resilient Distributed Dataset is a collection that has been distributed all over the Spark cluster. RDDs' main purpose is to support higher-level, parallel operations on data in a straightforward manner. There are currently two types of RDDs: parallelized collections, which take an existing Scala collection and run operations on it in parallel, and Hadoop datasets, which run functions on each record of a file in HDFS (or any other storage system supported by Hadoop).

This tutorial was written in October 2013. At the time, the current development version of Spark was 0.9.0.

The tutorial covers Spark setup on Ubuntu 12.04:

- installation of all Spark prerequisites
- standalone cluster setup (one master and 4 slaves on a single machine)